Morag Watson, Digital Library Development Manager, Edinburgh University Library

Morag will be talking about Open Journal Systems (OJS).

We bid for the JISC Repository Enhancement strand as Corpus:

- We partnered with St Andrews.

- The project was to do a review of software.

- User surveys were also planned to be part of the work.

- The project would look at OJS and commercial software like ScholarOne and Bepress.

- OJS comes from the Public Knowledge Project.

- It is widely used.

- c. 2000 journals already registered but many have not published many issues.

- Open access publishing platform.

- Locally installed and locally controlled - this is a big benefit for us in terms of what you can do with the journal and how you control it.

Software is licensed under the GNU General Public License.

- There is lots of support and documentation

- Very active support forums

- Developer forum

- Video tutorials

- OJS in an hour (although it's 178 pages!)

- Active and involved worldwide community.

So what does OJS do?

- A journal management and publishing system - from author submission to editorial control and formatting.

- Policies, processes etc. can all be set by the journal editors.

- Full indexing for searching inside the system and also indexed by Google.

- Can add keywords and geotags.

- Very low cost to access.

- Lots of journals in Africa use OJS - it is a helpfully low bandwidth system.

- You can have reader registration, and you can set up journal subscriptions in the system.

You click through the screen and when you're finished you've got a journal. Very easy and very menu driven. And it's easy to drag and drop stuff into the system. You do not need to be technical to use it.

It has a very flexible design (via CSS) for single and multiple journals. You can have one design for all journals or different designs for each journal you publish. You can add audio and video etc. One of the journals we're working with wants to add these types of elements in fact.

We've chosen a flexible design approach but some publishers on OJS reuse templates. Common templates can be reused to save effort. But there is an issue with users and academics wanting/not wanting a common design. If you do very customised designs you may need multiple instances of OJS.

Edinburgh is running a one year pilot implementation (from March 2009). Currently 2 journals: Critical African Studies (launched in May 2009) and Concept (launching October 2009). Critical African Studies was previously in print but there had been no issues for a while so the online version revives it.

We have branded OJS as part of the Edinburgh University Library website. Editors pick logos, abstracts etc. that then show up on the page.

The journal itself fits with what had already been publicised. It is mostly formatted through templates and CSS. We support the tools, training and setup but not the day-to-day running of the service.

Morag demos the system as a user who has a Journal Manager role. There are lots of roles that can be set up but you can turn off a number of these roles to make things simpler. You can have it set up for one person across the whole journal. The system emails users whenever items for review come in. All items are held in the system so if something changes or someone leaves all that data is safely stored. You can also set up future issues in advance quite easily. A lot of nice management features.

Pretty straightforward to use. There is also the possibility of RSS as part of the system. Also you don't have to stick to strict publishing schedules. RSS means people don't miss the next article even if publication schedules are changeable.

Implementation Issues

- Software setup - we are trying out one installation for all the journals.

- Workflows for journals are understood by academics, but managing them through an electronic system is different, and we now have a nice template for this.

- Interface configuration.

- Who owns the data? The journal? The academic? The library? The institution? It's a tricky balancing issue.

What next?

- More journals. We haven't been publicising this but lots of enquiries have been coming in at this stage all the same.

- Also looking at Institutional Journals (e.g. Current research in Edinburgh via repository).

- Integration with the Institutional Repository - some work in Australia looks at this and we'll be taking a look at this shortly.

- Planning/costing for live services.

- SDLC - other organisations in Scotland may want to offer this...

Q & A

Q (Richard Jones): On repository integration: can you only put the content in the repository or do you have to duplicate the data in both systems?

A (Morag): We need a way to publish data from those outside our institution as well, and that won't go into our institutional repository, so right now it is a matter of us wanting to back up what we want.

Q (Jo Walsh): You mentioned costings for a live service - does OJS take payments?

A (Morag): OJS hasn't charged us for anything yet. You can subscribe but not pay for journals. And no one we've been working with wants payments. As far as I know OJS doesn't mandate that and we're not interested in doing that.

Q: What formats can you publish in? HTML only? Or PDFs, docs etc.?

A: HTML and PDF are supported. You can submit in any format but have to publish as either PDF or a webpage.

Q (Les): Is this for lo-fi basic things or could there be some Nature competitors using OJS?

A: Here we are interested in the lo-fi stuff right now. But there are some bigger journals in the US that probably use a lot more functionality. Most of our academics want to switch off a lot of the additional features. We've only worked with 3 departments so far.

Les asks the crowd a question: How many folk have their own publications in the university? Only two people: OUP (which wouldn't use OJS) and UKOLN (who publish an international journal with OJS).

Morag: How much of the review process you want is important to how you set this stuff up.

Les: We find dissemination is the thing many of our academics want but I think we should look at this stuff as a complementary service.

Hugh Glaser and Ian Millard: RKB, sameAs and dotAC

Hugh starts by telling us: "This is all very exciting to me. It's a community I don't usually engage with and it's all very exciting - there's so much data - I want to eat it all!"

Hugh's work wasn't originally funded by JISC but this work is now being supported by the Rapid Innovation fund.

Linked Data - Tim Berners-Lee says it's the Semantic Web done right and the Web done right. This is linking open data and the idea is that you can name things. Once that's done you can use and work with them regardless of what those things are.

- Use URIs as names for things

- HTTP URIs so that people can look up those names

- When you look up the URI, provide useful information

- Include links to other URIs for further discovery.
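As a rough illustration of those four principles (my own sketch, not Hugh's code), here is a small Python example in which an in-memory dictionary plays the part of the web. The example.org URIs, the `FAKE_WEB` data and the function names are all made up for the purpose: each HTTP URI names a thing, looking it up returns a description, and the description links to further URIs that can be followed.

```python
from collections import deque

# Hypothetical data: a dict standing in for the web. Each key is an HTTP URI
# naming a thing; each value is what "looking it up" would return.
FAKE_WEB = {
    "http://example.org/id/person/alice": {
        "label": "Alice (researcher)",
        "links": ["http://example.org/id/paper/1"],
    },
    "http://example.org/id/paper/1": {
        "label": "A paper on repositories",
        "links": [
            "http://example.org/id/person/alice",
            "http://example.org/id/person/bob",
        ],
    },
    "http://example.org/id/person/bob": {
        "label": "Bob (co-author)",
        "links": [],
    },
}


def look_up(uri):
    """Pretend to dereference a URI; a real client would do an HTTP GET."""
    return FAKE_WEB.get(uri)


def discover(start_uri, max_hops=2):
    """Follow links breadth-first from start_uri, up to max_hops away."""
    hops = {start_uri: 0}
    queue = deque([start_uri])
    found = []
    while queue:
        uri = queue.popleft()
        description = look_up(uri)
        if description is None:
            continue  # nothing useful is published at this URI
        found.append((uri, description["label"]))
        if hops[uri] == max_hops:
            continue  # far enough from the start; do not follow further
        for linked in description["links"]:
            if linked not in hops:
                hops[linked] = hops[uri] + 1
                queue.append(linked)
    return found
```

Calling `discover("http://example.org/id/person/alice")` walks from the person to the paper to the co-author, which is the "further discovery" the last principle is about.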

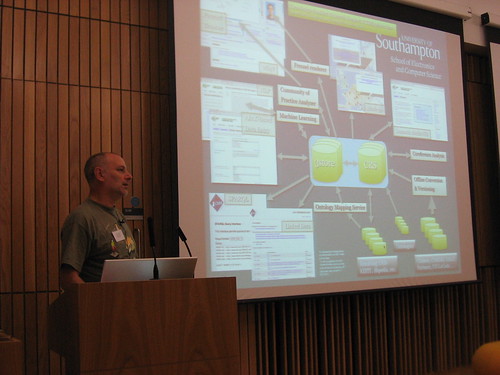

Hugh is showing an amazingly complex data architecture image of Southampton's system which links faceted browsing of multiple knowledge bases, ontology mapping etc. Sometimes the system goes out to find connecting data.

Hugh is also showing a series of source links and knowledge sources - it's a wide range and he warns us: "don't just look for what you expect to find". To follow up we have a hugely complex web of materials.

The interface to the system is available to view on the web at RKBExplorer.com - connections appear in this system from the semantics of the linked data. So you can track the connections. The interface uses loads of background services to deliver the service. Some people publish usable stuff. Others might want to use our RESTful outputs. You can use these URIs as part of applications or webpages etc. to pull together lots of information.

A postgraduate in chemistry who had done Google Gadgets before made a few gadgets with the RKB service. We are also thinking about doing something similar with the iPhone so you can, for instance, search around and get a sense of who you meet when you are at conferences.

The system

Concluding remarks

We gather data from all the e-prints today and from other systems tomorrow. You should worry about your identifiers - can you reliably match your publications to a consistent author id?

dotAC.info takes the previous work forward with a very UK focus. We'll do the geographic side of it, better co-reference, connect to live project databases etc. It will change the face of research in the UK and how it's developing.

Q & A

Q: Is there a REST interface /API and what's the URL for it?

A: The demos page is the best place to go but I'm currently working on better documentation and will probably put that on SourceForge. It's mostly quite simple but then you might want to get it back in different formats. We want to link in the linked data world but we want to be able to pull stuff out of that world too.

Q (Robin Taylor, UoE): What do you mean by ontologies?

A: I mean structured descriptive data standards like Dublin Core. We can cope with multiple ontologies but using a standard one can be a huge benefit to getting information out of systems and making the best use of linked data. It's nice if it's more powerful than Dublin Core but standard ontologies are the key thing.

Hugh: We are very very keen to get input on the dotAC project. Please drop us an email.

Q (Les): What benefit can we get back from your project?

A: One benefit is quite direct: we will be able to link data in your repository to project data and other data on the web around the world. An interesting thing is: what's the vision of the interesting stories or uses of this data you could make? It's not about the journal articles - there are lots of other possibilities and emergent properties. This is a new world and you need ideas and visualizations to see where it's going next. This is why we're pushing the service side of things.

Q: Where you have countries harvesting repositories into one place: if there isn't a consistent naming strategy you have a problem of duplication. Your service could help with this I think.

A: Yup, we can link things and aggregate them. We aren't talking about this as a naming authority though. You can have your URIs and manage those. Other people do their own thing. The service then decides whether to believe your relationship to those URIs or someone else's. This adds some reliability to search.
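To make the co-reference idea a bit more concrete, here is a minimal sketch of grouping URIs that are claimed to name the same thing into bundles, using a union-find structure. This is my own illustration and not the sameAs service's implementation or API: the `CorefStore` class, the `trusted_sources` set and the example.org URIs are all assumptions. Nobody's URIs get renamed; the store just records which claims it chooses to believe.

```python
class CorefStore:
    """Groups URIs into bundles of names believed to denote the same thing."""

    def __init__(self):
        self._parent = {}

    def _find(self, uri):
        """Return the representative URI of the bundle that uri belongs to."""
        self._parent.setdefault(uri, uri)
        root = uri
        while self._parent[root] != root:
            root = self._parent[root]
        # Path compression: point everything on the path straight at the root.
        while self._parent[uri] != root:
            next_uri = self._parent[uri]
            self._parent[uri] = root
            uri = next_uri
        return root

    def assert_same(self, uri_a, uri_b, source, trusted_sources):
        """Record a claim by source that uri_a and uri_b co-refer.

        The claim is only believed if source is in trusted_sources.
        Returns True if the claim was accepted.
        """
        if source not in trusted_sources:
            return False
        self._parent[self._find(uri_a)] = self._find(uri_b)
        return True

    def bundle(self, uri):
        """Return every known URI in the same bundle as uri, sorted."""
        root = self._find(uri)
        return sorted(u for u in list(self._parent) if self._find(u) == root)
```

A search service could then treat any URI in `bundle(uri)` as a match for `uri`, while each institution carries on minting and managing its own identifiers.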

Gavin

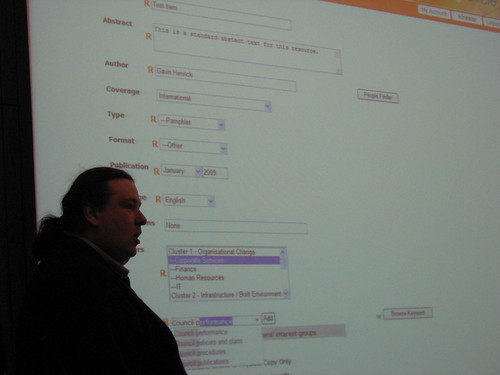

Enovation is a company that supports multiple types of repositories for various organizations so we do things like developing the UI.

So for Trinity College Dublin, for instance, Enovation took DSpace and added:

- Two-way integration to student research system.

- Custom e-theses workflow from submission to printing.

- Hiding empty collections.

- Enhanced advanced search.

- Extended integration of the TAPIR code to cater for TCD licensing and restrictions.

- Site was a CMS with document management plug-in.

- Wanted a more strictly controlled repository with set meta data requirements and simple processes.

- Specific design and user interface changes.

- Streamlined submission process.

- Much improved browsing experience.

Make adding items (deposit) simpler with a tree based category selection, a simpler submit page encompassing all aspects on one page.

- Standardize the author names - implement a find people interface connected to central user system.

- Standardize keywords - implement a taxonomy driven lookup with pre-checked keywords.

- Make adding news/event information easier - embedding an HTML editor for news areas and we added news and events blogs to the site.

There is a slightly different dynamic to this use of a repository but DSpace is being used effectively for what they want. It's not a full CMS but the WYSIWYG news editor makes a big difference to usability and flexibility. None of the IR managers we've dealt with can write HTML but the interface forces you to do that. So the standard WYSIWYG approach we've added here works far better.

SDCC came with exact specifications so we tailored DSpace to meet that. The homepage is lively and bright to look at and looks pretty unlike DSpace - and required quite a lot of development. This site isn't available publicly but it is a really useful system for the 50,000 staff to use internally. Giving numbers of items in each section makes navigation quicker and easier.

Various projects Enovation are currently working on (but can't unfortunately be named at this stage) include:

A Digital Learning Object Repository which will include:

- Recommend a resource (positive system only - some things will be more recommended than others).

- Add a review/comment.

- Subject based communities of practice (using Mahara).

- Use of eSciDoc front end.

- Customized services.

Social Studies "Dark Archive"

- Preserve original image formats internally.

- Generate and publish web formats.

- Export of system to open access repository.

- Link to map server for rendering.

Open Access

- Harvester for the nationally funded project.

- Linking seven universities in Ireland.

Q & A

Q: What is the take home message here? Do all these repository systems have problems that repository developers should look at?

A: Well, as was said this morning, when IRs were thought of they were thought of by developers for admins and managers NOT for researchers and you really need them to suit researchers. This is a marketing tool in many ways so it has to be good and simple and fit with the wider web interfaces much better.

Q: One question from 2 angles. With my DSpace hat on: is this an XML UI or a customized UI? And are you going to get code back into DSpace?

A: Yes, we are in talks to do this. This is a customized UI. Some parts can be easily added. Things like the people finder would be an obvious thing to add to more repositories and would be easy to break out.

Q (Les): You make things better for researchers but the researchers don't commission this sort of work - it's the managers or the librarians...

A: Researchers can be core to the UI needs: for Trinity College Dublin the visionary management let it be user led. The Learning Circle site was also user driven.

Fred Howell, TextSensor:

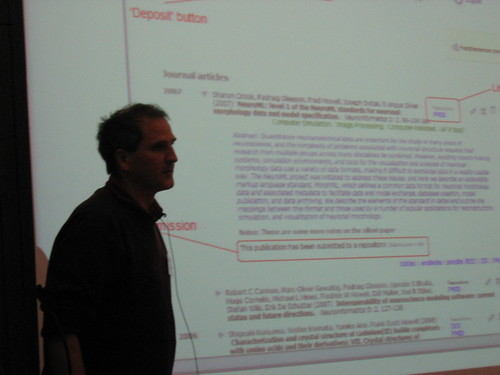

- The idea was a project to connect publicationslist.org to the Depot, a nationwide repository being run by EDINA.

- Researchers care about their own web page but they don't want to fill in metadata forms.

- So we started from the point of seeing how we could make a personal home page really easy. And once that's been done maybe we can connect that to the repository.

There is an automated process that detects your publications and whether or not they have been deposited, then lets you do a simple SWORD deposit: pressing the button deposits the item.

Initially we thought that SWORD would solve all problems. But as Richard mentioned earlier it is tough to change anything once deposited with SWORD so we had to do some additional API work. So the system checks the status of the items and, based on timestamps, redeposits an item as needed.
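The timestamp check could look something like the sketch below. This is a guess at the logic, not Fred's code: the function names and the three action labels are assumptions, and a real version would get `deposited_at` from whatever status API the repository offers.

```python
from datetime import datetime, timezone


def sync_action(local_modified, deposited_at):
    """Decide what to do with one item.

    local_modified: when the item last changed in the publications list.
    deposited_at:   when it was last sent to the repository, or None if
                    it has never been deposited.
    Returns "deposit", "redeposit" or "skip".
    """
    if deposited_at is None:
        return "deposit"
    if local_modified > deposited_at:
        return "redeposit"  # the list has changed since the last deposit
    return "skip"


def plan_sync(items):
    """Map each item id to an action, given (id, modified, deposited) tuples."""
    return {
        item_id: sync_action(modified, deposited)
        for item_id, modified, deposited in items
    }


# Example with made-up items and dates.
EXAMPLE_ITEMS = [
    ("paper-1", datetime(2009, 7, 1, tzinfo=timezone.utc), None),
    ("paper-2", datetime(2009, 7, 20, tzinfo=timezone.utc),
     datetime(2009, 7, 10, tzinfo=timezone.utc)),
    ("paper-3", datetime(2009, 6, 1, tzinfo=timezone.utc),
     datetime(2009, 7, 10, tzinfo=timezone.utc)),
]
```

Running `plan_sync(EXAMPLE_ITEMS)` marks the first item for deposit, the second for redeposit and leaves the third alone.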

APIs required:

- Initial single item deposit - SWORD (with json-bibtex metadata).

- Send updates/new version of metadata.

- Check status of deposited items.

- Search for authors in repositories.

- Make the process simple and automated.

One of the things we have built in is a PubMed search that lets you find your papers. Once you have a publications list you click deposit - and this creates an instant account on the Depot. You then click to send the articles to the repository. We haven't typed anything in but you have deposited your papers etc. So part one of the process is great. But then you've reached the limits of SWORD. Would be great to build updating etc. into future iterations of SWORD.

We felt that academics and researchers don't stay in one institution, so what happens when they move to a new one? So we moved to a model of "here are my publications as an Atom feed (or similar)", and this feeds all the relevant repositories.

PULL is better than PUSH because it:

- Removes the need for researchers to do anything extra to deposit.

- Is much simpler for publications list providers to support.

- And it's so much easier to hit multiple repositories at once.
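Here is a rough sketch of what the repository side of the pull model might look like, assuming the publications list exposes a plain Atom feed. The feed content, the example.org URLs and the function names are invented for illustration; only the Atom namespace and element names come from the Atom format itself. The repository remembers when it last harvested and picks up any entry updated since then.

```python
import xml.etree.ElementTree as ET
from datetime import datetime, timezone

ATOM = "{http://www.w3.org/2005/Atom}"

# Used as the "last harvest" time when a repository has never pulled before.
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)

# Hypothetical feed a publications list might expose for one researcher.
SAMPLE_FEED = """<feed xmlns="http://www.w3.org/2005/Atom">
  <title>Publications of A. Researcher</title>
  <id>http://example.org/researcher/feed</id>
  <updated>2009-07-30T12:00:00Z</updated>
  <entry>
    <id>http://example.org/pubs/1</id>
    <title>An older paper</title>
    <updated>2009-05-01T09:00:00Z</updated>
  </entry>
  <entry>
    <id>http://example.org/pubs/2</id>
    <title>A newer paper</title>
    <updated>2009-07-30T12:00:00Z</updated>
  </entry>
</feed>
"""


def parse_time(text):
    """Parse an Atom timestamp such as 2009-07-30T12:00:00Z."""
    return datetime.fromisoformat(text.strip().replace("Z", "+00:00"))


def entries_to_pull(feed_xml, last_harvest=EPOCH):
    """Return (id, title) for every entry updated after last_harvest."""
    root = ET.fromstring(feed_xml)
    wanted = []
    for entry in root.findall(ATOM + "entry"):
        updated = parse_time(entry.findtext(ATOM + "updated"))
        if updated > last_harvest:
            wanted.append(
                (entry.findtext(ATOM + "id"), entry.findtext(ATOM + "title"))
            )
    return wanted
```

Each repository can run this against the same feed on its own schedule, which is why hitting several repositories at once costs the researcher nothing extra.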

Q & A

Q (Jim Downing, University of Cambridge): Have you had people move institutions yet? I can see far more issues being raised than just where the files are.

A: We have people who have moved institutions on publications list but this EMLoader service has not been tried as a live service yet.

Les: It is really exciting to see these problems being looked at and tackled!

Comment (Peter Burnhill): I wanted to point out that Fred and I met on the internet. A blogger in Ohio reviewed publicationslist.org and the Depot and suggested they should be connected and that's what actually kicked off this whole process. By weird coincidence both Publicationslist and EDINA were in the same city anyway. We were mated on the internet by a blogger in Ohio!

Q: If someone changes something at the web end it changes in the repository, but does it work in the other direction?

A: No, we have only done unidirectional but it would be good to do the two way thing.

Daniel Hook, Symplectic

So what we have done is automatic aggregation of data from key data sources to automatically generate lists of publications. There is a balance to be found between all data sources and useful ones. We also let people search Google Books to find publications. The idea is to minimise manual data input for these webpages.

Ben and Sally talked this morning about disproportional feedback and this is a great thing to have for academics. We need to make pages so much more useful than what they are used to. We need citation and usage statistics and we're working with Thomson and Scopus to get at this sort of data. There is also the matter of research strategy. There is so much bibliographic data that can be used to show the bibliographic strengths of the institution automatically.

So this is a demo showing lots of different items - books, chapters, conferences, journals etc. - which can be approved or not depending on whether that aggregation has found your work. The idea is any sort of RAE/REF item can be grabbed.

So the system searches for various terms - you can set up default search terms, check settings etc. so that your persona can be used to find your work essentially (although in this demo it is different settings for each repository/data source). Additionally categories are key in archives so you can restrict your search to expected categories. We talked about URIs and IDs so you can use your archive identifier as one of your settings.

The search looks for publications regularly and alerts you if/when items are found so that you can approve (or not) of them. Approved items may include multiple copies - perhaps a manual record and an automatic Web of Science record. You can add a manual link to override any data errors.
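One way to picture multiple copies of the same item living side by side is the sketch below. It is only an illustration under my own assumptions, not how Symplectic actually does it: the record fields, the `SOURCE_PRIORITY` ordering and the matching rule (DOI first, normalised title as a fallback) are all made up. The manual copy is ranked first so that it can override errors in the automatically gathered data.

```python
import re

# Assumed ordering: lower number wins. The manual copy comes first so a
# hand-entered record can override errors in automatically gathered data.
SOURCE_PRIORITY = {"manual": 0, "web_of_science": 1, "pubmed": 2}


def match_key(record):
    """Key used to decide that two records describe the same publication.

    Prefers the DOI and falls back to a normalised title. A copy with a DOI
    and a copy without one will not be matched by this simple rule.
    """
    doi = record.get("doi")
    if doi:
        return "doi:" + doi.strip().lower()
    title = re.sub(r"[^a-z0-9]+", " ", record["title"].lower()).strip()
    return "title:" + title


def merge_records(records):
    """Collapse copies of the same publication into one record each."""
    groups = {}
    for record in records:
        groups.setdefault(match_key(record), []).append(record)
    merged = []
    for copies in groups.values():
        copies.sort(key=lambda r: SOURCE_PRIORITY.get(r["source"], 99))
        best = dict(copies[0])  # fields come from the most trusted copy
        best["sources"] = [r["source"] for r in copies]
        merged.append(best)
    return merged
```

The merged record keeps a `sources` list, so it is still possible to see that both a manual and an automatic copy exist.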

You can also see if other authors have approved an article. And this means we have a co-author list for the service and can see who has worked with whom.
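A co-author list of this kind falls out of the approvals fairly directly. The sketch below is my own illustration rather than anything shown in the demo: it assumes approvals arrive as simple (article, author) pairs, and the function name is hypothetical.

```python
from collections import defaultdict
from itertools import combinations


def coauthor_map(approvals):
    """Build a who-has-worked-with-whom map from approvals.

    approvals: iterable of (article_id, author) pairs, one per approval.
    Returns a dict mapping each author to a sorted list of co-authors.
    Authors with no co-authors do not appear in the result.
    """
    authors_by_article = defaultdict(set)
    for article_id, author in approvals:
        authors_by_article[article_id].add(author)

    coauthors = defaultdict(set)
    for authors in authors_by_article.values():
        # Every pair of authors who approved the same article is linked.
        for first, second in combinations(sorted(authors), 2):
            coauthors[first].add(second)
            coauthors[second].add(first)
    return {author: sorted(others) for author, others in coauthors.items()}
```

The resulting map is also the raw material for the kind of collaboration diagram shown at the end of the talk.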

The pluses of this system are:

- Easy reference list of your work when it comes to filling in grant applications etc.

- It does feed a repository but searching is quite different as you can have a wider range of material represented here.

- You can find articles by text found in organisational papers so if you are looking for possible collaborators you have a good starting point to finding out more and making contact. It's a useful tool to browse the organisation as well as helping you find collaborators.

There are a few wrinkles: you can deposit articles from other authors. Physics is a bad subject for repositories as researchers have often already deposited in arXiv.org.

Finally Daniel shows us a diagram of the organisation connecting collaborators together. There is much potential to see the organisational research strategy. Visualisations will be going in the next version of the system.

Q (Les Carr): Yes there is a lot of research intelligence bound up in repositories that we need to harvest and look at. How many of you are working on this sort of service - do you see yours connecting to this service or as stand alone services?

A (Daniel): Richard will talk more about Symplectic tomorrow at his tutorial. He will be talking about SWORD and Atom, how those map onto Symplectic, and how other digital repositories connect to content. And how to deal with the layers of processes in front of repositories.

Daniel's presentation concluded the Show and Tell Sessions for the day so we adjourned to the Atrium for a drinks reception. The winning Pecha Kucha was also announced to be Julian Cheal of UKOLN and he gamely modeled his prize and glittery cowboy hat (the sticker you can just see shows his name and talk title - we had to drop our golden nuggets in our favoured hat!):

And with that it was off in our various directions for the evening...
